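Dick Lyon's post history can be viewed at this link. I translated three of his Quattro-related posts that are of reasonable length. The others are only a few lines long, or are merely pointers to papers, so please refer to the originals directly for those. Note that the posts are listed from oldest at the bottom to newest at the top.

My understanding remains somewhat shaky in places (around the Fourier transform discussion), so this post uses a parallel-text format and includes the English original alongside the translation. Where anything is unclear, treat the original English as authoritative; I leave the interpretation to each reader.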

(1) http://www.dpreview.com/forums/post/53098094

Laurence,

ローレンス,

Checking dpreview after hearing the news, I was amused to see that not much has changed in recent years.

発表のニュースを聞いた後dpreviewをチェックし, Quattro センサーはここ数年であまり大きく変化していないのだと分かってなんだか愉快な気持になってしまった.

Your Shakespearean description is not bad. The collected electrons are exactly the corpses of absorbed photons, which is the point that most people who talk about the filtering miss: absorption and filtering and detection being the same event.

君のシェイクスピア風の記述はイイね. 集められた電子は確かに, 吸収された光子の成れの果てというところだ. これが多くの人たちが話題にしている"filtering miss"についてのポイント: 吸収とフィルタリングと検知は全て同じ事象である, だ.

But the "stronger" and "weaker" is not quite right. The high-frequency blue photons are stronger (highest energy); but the way they interact with silicon makes them get absorbed soonest, near the surface. The lowest energy photons, in the infrared, penetrate deep. Low frequencies, at wavelengths greater than 1100 nm, where the photons are quite weak, are not able to kick electrons out of the silicon lattice at all, so the silicon is completely transparent to them. In between, there's a nicely graded rate of absorption.

しかし"stronger"(強力)と"weaker"(微弱)という説明は全く正しくない. 高周波数の青光子は"より強い(stronger)" (最も高いエネルギーを持つ); しかしシリコンと接触すると, 青光子が最も早い段階, つまり表面近くで吸収される. 最も低いエネルギーしか持たない光子, つまり赤外光はシリコン内部に深く入り込む. 波長1100nm以上の低周波数帯では光子の力はきわめて弱く, シリコン格子から電子をはじき出すことは全く出来ない. それ故シリコンはそれらに対して完全に透過的だ. その中間帯での吸収率はなめらかで段階的なものになっている.

Understanding the spectral responses of the layers starts with understanding that at any wavelength, the light intensity decreases exponentially with depth in the silicon. I think I've written about this some place... Anyway, the top layer is not white, not luminance, not blue, but a sort of panchromatic blueish that turns out to work well enough for getting a high-frequency luminance signal. We did a lot of experiments and amazed ourselves how "well enough" the 1:1:4 worked; it was not obviously going to be a good thing, but turned out awesome.

各レイヤのスペクトル応答性(spectral responses)を知ることは, つまり次のことを理解することから始まる. どの周波数においても光度(light intensity)は, シリコン中の深度に対し指数的に減衰する. たぶんどこかでこのことについて書いたと思うんだけど... ともかく, トップレイヤーは白色ではない, Luminance (輝度)ではない, 青色ではない, なんというかある種の青みががったパンクロマティックで, こいつは高周波数のLuminance信号を取るのに十分だという結果が得られた. 僕たちは数多くの実験を行い, 1:1:4構造がこんなにもうまく動作することに驚かされた; 1:1:4というアイデアは最初から良い結果が得られるだろうと思えるものではなかったんだ. けど結果的には, スンバらしいということが分かった.
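The exponential fall-off described above is the Beer-Lambert law, I(z) = I0 * exp(-z / d), where d is the 1/e absorption depth at a given wavelength. Below is a minimal Python sketch of how that law would split light among three stacked layers. The absorption depths and the layer boundaries are rough illustrative assumptions, not Foveon design data, and all names are hypothetical.

```python
import math

# Illustrative 1/e absorption depths in silicon, in micrometres.
# Rough order-of-magnitude assumptions, not measured Foveon data.
ABSORPTION_DEPTH_UM = {"blue_450nm": 0.4, "green_550nm": 1.5, "red_650nm": 3.5}

# Hypothetical layer boundaries (top, middle, bottom), in micrometres.
LAYERS_UM = [(0.0, 0.4), (0.4, 1.5), (1.5, 5.0)]


def fraction_absorbed(depth_top_um, depth_bottom_um, absorption_depth_um):
    """Fraction of incoming light absorbed between two depths (Beer-Lambert)."""
    entering = math.exp(-depth_top_um / absorption_depth_um)
    leaving = math.exp(-depth_bottom_um / absorption_depth_um)
    return entering - leaving


def layer_response(absorption_depth_um):
    """Absorbed fraction in each layer, ordered top, middle, bottom."""
    return [fraction_absorbed(a, b, absorption_depth_um) for a, b in LAYERS_UM]


if __name__ == "__main__":
    for name, depth in ABSORPTION_DEPTH_UM.items():
        print(name, [round(x, 2) for x in layer_response(depth)])
```

With these assumed numbers, blue is absorbed mostly in the top layer, green in the middle and red in the bottom, yet the top layer still collects a share of every color, which fits the "panchromatic blueish" description.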

We had 1:1:4 sensors working, including very efficient and effective hardware and software processing pipelines, before I left Foveon back in '06, but the sensors didn't yet have the low-noise fully-depleted top-layer photodiodes of the "Merrill" cameras, and we were only targeting these for cell phones at the time. I expect it will be a killer combination: fully-depleted blue plus 1:1:4. I don't think the red and green are fully depleted, too; that was thought to be somewhere between hard and impossible, which is why they don't have the same low read noise, and one reason why aggregating red and green quads this way is a big win.

僕たちは1:1:4センサーを実稼動させた. これは非常に効率の良いハードウエアとソフトウエアの演算パイプラインを含む. 僕が'06にFoveonを去る前の話だ. でもこの時点でセンサーはまだ, "Merrill"カメラのような, 低ノイズの完全空乏型(fully-depleted) フォトダイオードをトップレイヤーに搭載していなかった. また僕らは当時携帯電話のみをターゲットとしていた. 僕はこれはキラー・コンビネーションになると思う: '完全空乏型青 + 1:1:4'. 僕は緑と赤も同じように完全空乏になるとは考えていない; 難しいと不可能の間, といったところだろう. そしてこのことが, ミドル・ボトムレイヤーではトップレイヤーと同程度の低い読み込みノイズは得られない理由であり, また赤および緑を4個1セットに結合させることがこの場合非常に大きな利益をもたらすとする根拠でもある.

But understanding how it compares to the classic Foveon and to Bayer will keep many people busy for a long time. Something for dpreviewers to do while waiting for cameras, and something to keep the marketing people tied up... Should be fun.

しかしどのようにして1:1:4構造のセンサーを既存のFoveonセンサーと, またベイヤーと比較できるのかが分かるまで, 長い間多くの人を多忙にさせそうだ. カメラが発売されるのを待つしばしの間, dpreviewerではアンナコト, またマーケティングの人たちをかかりっきりにするソンナコト, 色々あることだろう. 楽しそうじゃないか.

Dick

--- End of post (1)

(2) http://www.dpreview.com/forums/post/53099508

Kendall, long time...

Kendall, 久しぶり...

You're right that there won't be much aliasing. A lot of people seem to have the idea that aliasing has something to do with different sampling positions or density, as in Bayer. But that's not the key issue. The problem with Bayer is that the red plane (for example) can never have more than 25% effective fill factor, because the sampling aperture is only half the size, in each direction, of the sample spacing. If you take the Fourier transform of that half-size aperture, you'll find it doesn't do much smoothing, so the response is still quite too high way past the Nyquist frequency. That's why it needs an anti-aliasing filter to do extra blurring. But if the AA filter is strong enough to remove all the aliasing in red, it also throws away the extra resolution that having twice as many green samples is supposed to give. It's a tough tradeoff.

深刻なエイリアシングは起きないだろうという君の意見は正しい. ベイヤーのように, 異なるサンプリング位置と密度がエイリアシングと関連性を持つ, そう多くの人が考えているようだ. しかしそれは懸念事項ではない. ベイヤーの問題は, (例えば)赤プレーンは絶対に25%以上の実効フィル・ファクター(曲線因子, effective fill-factor)を得ることはできない, なぜならサンプリング径(sampling aperture)はいずれの次元においても, サンプル間隔のたった半分のサイズだから, ということだ. もしそのハーフサイズ径でフーリエ変換を施しても, あまり良くスムージング(smoothing)しないことが分かる. だから得られた結果はナイキスト周波数の高い方に行過ぎてしまっている. 結果更にボカシを入れるためアンチ・エイリアシング・フィルターが必要になるのだ. しかしもしもAA (アンチエイリアシング)フィルターが, 赤での全エイリアシングを除去するのに十分強力であったとすると, 更に解像度を犠牲にすることになる. これは緑サンプルが提供する解像度の2倍であったものだ. 厳しいトレードオフだと思う.

In the Foveon sensor, the reason no AA filter is needed is not because of where the samples are, or what the different spatial sampling densities are. It's because each sample is through an aperture of nearly 100% fill factor, that is, as wide each way as the sample pitch. The Fourier transform of this aperture has a null at the spatial frequencies that would alias to low frequencies; this combined with a tiny bit more blur from the lens psf is plenty to keep aliasing to a usually invisible level, while keeping the image sharp and high-res.

FoveonセンサーでAAフィルターが必要ないのは, サンプルがどこに位置しているのか, 空間サンプリング密度の違いは何か, というようなことに拠るものではない. 各サンプルが, ほぼ100%のフィルファクター(曲線因子, fill factor)を持つ全サンプル径から採られたものだからだ. つまり, どちらの次元方向にもサンプル・ピッチと同じだけの幅を持つ. 低周波数帯でエイリアシングを発生させる可能性のある空間周波数にて, この全サンプル径へフーリエ変換を施すとnullとなる; この結果は レンズpsf (Point spread function 点拡がり関数) 由来のより大きなボケと混ぜ合わさり, エイリアシングを気づかないレベルにとどめるに十分だ. 一方画像をシャープで高解像度に保つ.
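The aperture argument above can be checked numerically. The Fourier transform of a box-shaped sampling aperture is a sinc function, so the sketch below evaluates |sinc| for a half-pitch aperture (the Bayer red plane) and a full-pitch aperture (Foveon). The one-dimensional ideal box model and the function names are simplifying assumptions made here for illustration.

```python
import math

NYQUIST = 0.5        # cycles per sample pitch
SAMPLING_FREQ = 1.0  # detail at this frequency aliases down to zero frequency


def box_aperture_mtf(freq, aperture_width):
    """|sinc| response of an ideal box aperture.

    freq is in cycles per sample pitch; aperture_width is in units of the pitch.
    """
    x = freq * aperture_width
    if x == 0:
        return 1.0
    return abs(math.sin(math.pi * x) / (math.pi * x))


def fill_factor(aperture_width):
    """Area fill factor of a square aperture on a square sampling grid."""
    return aperture_width ** 2


if __name__ == "__main__":
    for label, width in (("half-pitch aperture (Bayer red)", 0.5),
                         ("full-pitch aperture (Foveon)", 1.0)):
        print(label,
              "| fill factor:", fill_factor(width),
              "| MTF at Nyquist:", round(box_aperture_mtf(NYQUIST, width), 3),
              "| MTF at sampling freq:",
              round(box_aperture_mtf(SAMPLING_FREQ, width), 3))
```

In this model the half-pitch aperture has a 25% fill factor and still passes about 64% of the contrast at the sampling frequency, well past Nyquist, while the full-pitch aperture has a null exactly there.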

In the 1:1:4 arrangement, each sample layer has this property, but at different rates -- very unlike the Bayer's red and blue planes. The large area of the lower-level pixels is the ideal anti-aliasing filter for those layers; the top layer is not compromised by the extra spatial blurring in the lower layers, so it provides the extra high frequencies needed to make a full-res image.

1:1:4配置において, 各サンプルレイヤーはこのような特徴を持つ. しかしその度合いは異なる. -- このことはBayerの赤プレーン青プレーンとはまったく様相が異なる. 下層ピクセルの大部分の領域は, 各レイヤーに対する理想的なアンチ・エイリアシングフィルターだ; トップレイヤーは, 下層での余剰な空間ボケ(spatial blurring)による劣化を起こさない. そしてfull-res画像生成のために必要な更なる高周波数を提供する.

Another good way to think of the lower levels is that they get the same four samples as the top level, and then "aggregate" or "pool" four samples into one. This is easy to simulate by processing a full-res RGB image in Photoshop or whatever.

もう1つ下層についてうまい考え方がある. 各下層はトップレベルと同様に, 同じ4つのサンプルを得る. そしてその4つのサンプルを, 1つへと"まとめる"或いは"プールする". この考え方はPhotoshopででも何ででも, full-res RGB画像を加工することで簡単にシュミレーションできる.
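The simulation suggested above (pooling four samples into one on the lower layers of a full-res image) might look like the following Python sketch. It works on plain nested lists rather than a real image file, and averages each 2x2 block; the function names are hypothetical and this is not Foveon's actual processing pipeline.

```python
def pool_2x2_mean(plane):
    """Average each 2x2 block of a 2-D list; height and width must be even."""
    return [
        [(plane[r][c] + plane[r][c + 1]
          + plane[r + 1][c] + plane[r + 1][c + 1]) / 4
         for c in range(0, len(plane[0]), 2)]
        for r in range(0, len(plane), 2)
    ]


def simulate_1_1_4(top, middle, bottom):
    """Keep the top plane at full resolution and pool the two lower planes."""
    return top, pool_2x2_mean(middle), pool_2x2_mean(bottom)


def count_values(*planes):
    """Total number of stored samples across the given planes."""
    return sum(len(row) for plane in planes for row in plane)


if __name__ == "__main__":
    full = [[float(r * 4 + c) for c in range(4)] for r in range(4)]
    top, middle, bottom = simulate_1_1_4(full, full, full)
    print("1:1:1 values:", count_values(full, full, full))
    print("1:1:4 values:", count_values(top, middle, bottom))
```

For a 4x4 test image this stores 24 values instead of 48, the same halving as 30 M versus 60 M values discussed later in the post.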

The pooling of 4 into 1 is done most efficiently in the domain of collected photo-electrons, before converting to a voltage in the readout transistor. The result is the same read noise, but four times as much signal, so about a 4X better signal-to-noise ratio. Plus with fewer plugs, transistors, wires, etc. to service the lower levels, the pixel fill factor is closer to 100% with easier microlenses, and the readout rate doesn't have to be as high. Wins all around -- except for the chroma resolution.

4つを1つへプールする処理は, 読み出し(readout)トランジスタの中で電圧に変換される前に, 集められたphoto-electron ドメイン内でもっとも効率よく行われる. そこから得られる結果といえば, 同等の読み出しノイズ, 4倍の信号, 従って4x良いS/N比. 加えて, 下層を動かすためのプラグ, トランジスタ, ワイヤetcはより少なくて済み, pixelフィルファクター(曲線因子)は単純なマイクロレンズで100%に近い. そして読み出し率はそれほど高い必要がない. 良いことだらけだ. -- Chroma 解像度についてを除いては.
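The signal-to-noise arithmetic above can be sketched as follows. The model (Poisson shot noise plus a fixed read noise per readout, all in electrons) is an assumption added here; the post itself only mentions read noise, and its "about 4X" figure corresponds to the read-noise-limited case.

```python
import math


def snr_single(signal_e, read_noise_e):
    """SNR of one pixel: shot noise variance equals the signal in electrons."""
    return signal_e / math.sqrt(signal_e + read_noise_e ** 2)


def snr_charge_pooling(signal_e, read_noise_e, n=4):
    """Pool n pixels as charge, then read once: one dose of read noise."""
    total = n * signal_e
    return total / math.sqrt(total + read_noise_e ** 2)


def snr_digital_pooling(signal_e, read_noise_e, n=4):
    """Read n pixels separately, then add them: n doses of read noise."""
    total = n * signal_e
    return total / math.sqrt(total + n * read_noise_e ** 2)


if __name__ == "__main__":
    for signal in (1, 100, 10000):
        base = snr_single(signal, 20)
        print("signal", signal,
              "| charge pooling gain:",
              round(snr_charge_pooling(signal, 20) / base, 2),
              "| digital pooling gain:",
              round(snr_digital_pooling(signal, 20) / base, 2))
```

Under these assumptions, pooling in the charge domain approaches a 4X gain when read noise dominates (dim light), whereas adding four separately read pixels gives only 2X. In bright light, where shot noise dominates, both approach 2X.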

The main claim of Bryce Bayer, and the fact that most TV formats and image and video compression algorithms rely on, is that the visual system doesn't care nearly as much about chroma resolution as about luma resolution. Unfortunately, trying to exploit that factor with a one-layer mosaic sensor has these awkward aliasing problems. Doing it with the Foveon 1:1:4 arrangement works better, requiring no AA filter, no filtering compromises. So, yes, the chroma resolution is less than the luma resolution, but you'd be hard pressed to see that in images.

Bryce Bayerの主張, またほとんどのTVフォーマットや画像や動画が依存している事実は次のようなことだ. 視覚系はluma (輝度) 解像度と同じようには, Chroma 解像度に対し敏感でない. 不運なことに, 単層モザイクセンサーでこの事象を利用しようとすると, 上で書いたような深刻なエイリアシングの問題に突き当たる. しかしFoveon 1:1:4 配置では, よりましな結果になり, AAフィルタを必要とせずフィルタによる劣化は起こらない. だから回答としてはyes, Chroma 解像度は, luma 解像度より低い. しかしそれを実際の画像で見分けるのは非常に難しいだろう.

If you throw out the extra luma resolution and just make 5 MP images from this new camera, you'll still have super-sharp super-clean versions of what the old DP2 or SD15 could do. Now imagine adding 2X resolution in each dimension, but with extra luma detail only, like in a typical JPEG encoding that encodes chroma at half the sample rate of luma. Whose eyes are going to be good enough to even tell that the chroma is less sharp than the luma? It's not impossible, but hard.

仮に増分のluma 解像度を放り捨て, この新しいカメラから5MP画像を単純に生成したとしたら, それでも旧DP2やSD15で生成できた画像の, スーパーシャープ, スーパークリーンなバージョンとなるだろう. 2倍の解像度を各次元に加えたと想像してみよう. しかしここでは新たに増えた分の luma ディテールのみを加えるのだ. これはまるでchroma を luma のサンプルレートの半分でエンコードする典型的なJPEGエンコーディングのようだ. その画像をみてchroma が luma と比べてシャープじゃないなどといったい誰が見分けられるのか?不可能とは言わない, がしかし難しい.

Speaking of stories from the old days, Foveon's first version of Sigma Photo Pro had a minor bug in the JPEG output, as you probably recall: our calls to the jpeg-6b library defaulted to encoding with half-res chroma. It took a while, but a user did eventually find an image where he could tell something was not perfect, by comparing to TIFF output, and another user told us how to fix it, so we did. If we could have gotten that original level of JPEG quality from the SD9 with 5 million instead of 10 million pixel sensors and data values, and could have gotten cleaner color as a result, would that have been a problem? I don't think so; except for marketing, and they had enough problems already. Same way with Sigma's new one, I expect; if 30 M values gives an image that will be virtually indistinguishable from what could be done with 60 M, but with cleaner color, will someone complain?

昔話をしよう. 覚えている人もいると思うが, FoveonのSigma Photo Pro最初バージョンには, JPEG 出力にマイナーなバグがあった: jpeg-6bライブラリへの呼び出しは, 50% のchroma 解像度でのエンコードをデフォルトにしてあったのだ. 時間はかかったがともかくTIFFからの出力と比較すると何かが欠けていると, それが分かるある一人のユーザが現われた. そしてまた別のユーザがそれについての対処法を僕ら伝えてきた. そうして僕らは修正できたのだった. SD9のもともとのJPEG品質レベルの画像を, 僕らは10M pixelセンサーの代わりに5Mセンサーからのデータで得ることが出来たのかもしれない. そしてそれは結果的によりクリーンな色情報を持つものだったのかも. それが何か問題になっただろうか?僕はそう思わない. マーケティングについては例外. でもマーケに関していえば, もうすでに十分な課題が山積していたからね. SIGMAのこの新しいカメラにも同じことが言えると思う; もし30M分の情報で, 60Mで得られるものとほとんど区別のつかない画像を生成できるとしたら, それで誰かが文句を言うのかな?

Probably so.

うん, まあ言うのかもしれない.

So, it's complicated. Yes, reduced chroma resolution is a compromise; but a very good one, well matched to human perception -- not at all like the aliasing-versus-resolution compromise that the mosaic-with-AA-filter approach has to face.

だから事態は複雑だ. その通り, Chroma 解像度を減らすことは, 1つの妥協だ. しかしとても筋のよい妥協で, 人間の知覚とよくマッチしている. -- これはモザイクとAAフィルターアプローチが面と向かうはめになる "エイリアシング-vs-解像度" による画質劣化といった事柄とは, まったく異質のものだ.

Dick

--- End of post (2)

(3) http://www.dpreview.com/forums/post/53107523

Truman, I guess you missed the bit where I wrote "A lot of people seem to have the idea that aliasing has something to do with different sampling positions or density, as in Bayer. But that's not the key issue. ..." It must have been your comments that prompted that. What is "sub-alias sampling" supposed to mean?

Truman, 君は僕が書いた "多くの人がベイヤーでそうであるように, エイリアシングが異なるサンプリング位置か密度となにか関係があると考えているようだが, それは大きな問題ではない..." という部分を見逃したのだと思う. これはこのことを示した君自身のコメントであるべきものだった. この"サブ-エイリアス サンプリング (sub-alias sampling)"とは具体的になにを意味するのだろう?

Sure, there will be aliasing, as in all sampled images. If you consider the pixel pitch and pixel aperture, you can work out that the aliasing will be about like that of the SD15's sensor. That is, negligible; and that will only be in chroma, so even more negligible, visually. The key next step is adding a high-frequency luminance signal, uncorrupted by that aliasing. That's what's nearly impossible to get accurately in Bayer sensors, but trivial to get with the Foveon 1:1:4.

そりゃそうさ, エイリアシングはある. サンプルされた画像全てにある. もし君がピクセルピッチとピクセル径を考慮しているのなら, エイリアシングはSD15のセンサーのそれとおなじようなものだと理解することができるだろう. つまり, 無視できる; そしてそれはchroma に対してのみで, すると視覚的に更に無視できる. 次のキー・ステップは, 高周波数の luminance 信号を加えることだ. これにはエイリアシングによる欠損がない. ここでベイヤーセンサーに正確性を求めることはほとんど不可能だ. しかしFoveon 1:1:4では簡単に得ることが出来る.

But you are certainly right, as I also said, that we will wait and see. My words and yours are not going to convince anybody of anything until they see it.

とは言え君はまったくもって正しい. また僕もそう言っているように, 待って見ようじゃないか. 実際に皆が実物を見るまで, 僕や君の言葉は誰も説得できやしないだろう.

As I also said elsewhere, I'm both surprised and delighted, after 8 years away from Foveon, to see this concept making it into a Sigma camera. It shows that Kazuto had the courage to focus on image quality above all, not fearing the marketing difficulty of explaining the Foveon advantage a little differently and with lower pixel numbers than before. As Laurence said, Merrill worked on this idea from its inception; Foveon's cell-phone sensor project did enough testing to show it's a great idea. And I presume the rest of the Foveon team has continued to execute well on the potential in bringing it to the larger format. This may be Merrill's best legacy, even more than the ones named for him.

前のポストでも言ったけど, Foveonを去ってから8年の後, このコンセプトがSIGMAのカメラに搭載されたことを目にしたことは, 僕にとっては驚きと喜び両方だ. 分かることは, Kazutoが何にもましてイメージ・クオリティにフォーカスする勇気を持ってたこと. 前よりやや少ないピクセル数になりFoveonのアドバンテージについて今までとは少し違う説明をしなければなならい, マーケティング的な難しさを厭わなかったこと, そういったことだ. Laurenceが言ったように, Merrillは当初からこのアイデアを実現しようとしていた; Foveonの携帯電話センサープロジェクトはこれが素晴らしいアイデアだと示すのに十分なテストはしていた. 後に残ったFoveonチームは, このアイデアをより大きなフォーマットへ適用できる将来性を見込んで, 首尾よく開発を継続し続けてきたのだと僕は推測するよ. これはMerrillの最も素晴らしい遺産と言えるかもしれない. 彼の名に由来するどんなこと以上にさ.

If I may summarize once more what I think is the key point: the tradeoffs and compromises about how to utilize the Foveon concept and technology have been thought through here, and developed and tested for many years, in a way designed to maximize image quality at both low and high ISO. The 1:1:4 architecture is surprising and non-intuitive, but it is what best exploits what the silicon can do (as Carver said, years before we started Foveon, "listen to the silicon" http://www.wired.com/wired/archive/2.03/mead.html). Listening is not a theoretical exercise, nor something you do in computer models (though they help).

ここでもう一度僕の考えるキーポイントをまとめるよ: Foveonのコンセプトとテクノロジーをどのように活用するのか, そこに生じるトレードオフと妥協の数々については, 低ISO, 高ISO両方で画質を最大限良くするデザインにする, という方向性で考え抜かれ, 時をかけてかけて開発・テストがなされてきた. 1:1:4構造は衝撃的でかつ直感的ではない. しかしこれはシリコンが出来ることを最も巧みに利用したものだ(僕たちがFoveonをはじめる何年も前にCraverが言ったように, "シリコンの言うことに耳を傾けよ"http://www.wired.com/wired/archive/2.03/mead.html). 耳を傾けるということは, 単に理論的考察を行うことではなく, またコンピュータ・モデルの中で何かゴニョゴニョするということでもない (もちろん助けにはなるけれども).

Dick

--- End of post (3)

Thursday, April 3, 2014

Wednesday, April 2, 2014

"Introduction to Quattro Sensor" - The lecture by K. Yamaki CEO in CP+ February 2014, Yokohama Japan

A few cautions: 1) The text is not a word-for-word transcription and translation. I (the blog poster) added or removed words and sentences to make the meaning clearer, so some accuracy may have been lost. The only trustworthy source is the video itself; 2) I'm not a native English speaker, so I'm certain there are mistakes in my English; please be tolerant.

Translator's notes are marked [TN].

[Page-1]

- Because the Quattro sensor structure is non-intuitive, I have seen rumors that it has departed from the classic Foveon X3, or that it is rather Bayer-like, but these are all untrue.

- The Foveon Quattro sensor is without doubt the true successor of Foveon X3 technology; this is the message I want to convey today.

- This lecture consists of three sections.

- 1. What is “Foveon X3 Technology”? The basics of the Foveon sensor are explained.

- 2. How does the Quattro sensor differ from, and how is it the same as, its predecessors?

- 3. Introduction to the new camera

| page-1 |

- Introduction to the Foveon Quattro Sensor

[Page-2]

- Foveon company logo

| page-2 |

[Page-3]

- Foveon is a wholly owned subsidiary of SIGMA.

- It designed and developed all the sensors used in SIGMA's cameras.

- It is fabless: it has no factory.

- It is responsible for sensor design and development.

- It pioneered the world's first three-layer full-color sensor and still leads the market.

| page-3 |

- It is a wholly owned subsidiary of SIGMA

- It developed the world's first image sensor that directly captures color using three layers of pixels. “Foveon” is taken as synonymous with “three-layer full-color sensor”.

[Page-4]

- The image sensor used by ordinary digital cameras, other than SIGMA's, is structured something like this (page-4).

- It is a monochrome sensor that can capture only contrast.

- RGB color filters are overlaid on the light-sensitive photodiodes, so each pixel captures a single color.

- To create the final image, the camera performs so-called color interpolation on the original mosaic data.

- In the late 1990s, the early days of digital cameras, many people insisted that digital camera image quality was no good and that there was no way it could overtake film.

- One reason for such negative feedback was the typical CFA sensor structure, i.e. it can capture only 1/3 of the light. It was often said that competing with film's rich image quality would be impossible as long as the sensor used a CFA structure.

- [TN] You can see an almost identical description at http://www.sigma-sd.com/SD1/system.html.

| page-4 |

- Ordinary CFA (Color Filter Array) sensor structure
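[TN] As a rough illustration of the color interpolation step mentioned above, here is a minimal Python sketch that estimates the missing green value at a red or blue photosite by averaging its measured green neighbors. The RGGB layout and the simple averaging are assumptions chosen for illustration; real cameras use considerably more elaborate algorithms.

```python
# Assumed RGGB tile: which color each photosite records.
BAYER_TILE = [["R", "G"],
              ["G", "B"]]


def colour_at(row, col):
    """Color of the filter over the photosite at (row, col)."""
    return BAYER_TILE[row % 2][col % 2]


def interpolate_green(mosaic, row, col):
    """Estimate green at (row, col) from a 2-D list of raw mosaic values."""
    if colour_at(row, col) == "G":
        return mosaic[row][col]  # green was actually measured here
    neighbours = []
    # At red and blue sites the up/down/left/right neighbors are all green.
    for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
        r, c = row + dr, col + dc
        if 0 <= r < len(mosaic) and 0 <= c < len(mosaic[0]):
            neighbours.append(mosaic[r][c])
    return sum(neighbours) / len(neighbours)


if __name__ == "__main__":
    raw = [[10, 20, 10],
           [40, 99, 60],
           [10, 80, 10]]
    print(interpolate_green(raw, 1, 1))  # blue site: green is a guess
```

The green value at the center photosite is never measured, only estimated from its neighbors, which is the kind of guesswork the three-layer sensor avoids.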

[Page-5]

- The Foveon sensor has a vertical three-layer structure (page-5).

- You might see some resemblance to how film captures light.

- In the early days of digital cameras, this “three-layer structure” was just a kind of fantasy.

| page-5 |

- Foveon X3 Sensor

[Page-6]

- This (page-6) is evidence of that “fantasy”.

- dpreview, April 1, 2000: “Leak! Canon developing multi-layer CCD”. As you can see, this was an April Fool's joke article by Phil Askey.

- [TN]: URL of this page: http://www.dpreview.com/news/2000/4/1/canonccd

| page-6 |

[Page-7]

- “Fooled you?” on April 2nd. Phil wrote:

- “It's a completely fictitious...”

- He also said, “although I'm sure it's beyond practical manufacture. (Manufacturers who think it's viable... email me ;)”

- By this time Foveon had already been developing a three-layer image sensor, so its marketing manager at the time actually emailed Phil, who was incredibly surprised. After an NDA was signed, what Foveon was doing was disclosed to him.

- As this episode shows, the “three-layer sensor” was still just a fairy tale at the time.

- [TN]: URL of this page: http://www.dpreview.com/news/2000/04/02/fooled

| page-7 |

[Page-8]

- Where Foveon is located.

| page-8 |

- Foveon company location

[Page-9]

- Santa Clara, California

- The office is right in front of the Santa Clara University campus.

| page-9 |

[Page-10]

- The origin of the company name “Foveon”

| page-10 |

- The origin of the company name “Foveon”

[Page-11]

- Cutaway of the human eye (page-11).

- The center of the retina is called the “Fovea Centralis”. That part captures the finest details at the highest resolution.

- There are two types of visual cell. One is the cone, which can capture the fine details of objects; the other is the rod, which works well in darkness but provides no color information.

- The Fovea Centralis is where the density of cones is highest (so it can capture a high-resolution image).

- In fact, Foveon stands by the principle of making the world's highest-resolution image sensors and cameras, like the Fovea Centralis in the human eye. That has been the company's mission since day one, and it is the point of today's lecture.

- The company name was originally “Foveonics”. They wanted to be a camera maker from the start, and looking around the industry, the major players had names like Nik“on”, Can“on”, and Tamr“on”, so they dropped the -ics and it became Foveon, which sounds much more like a camera maker.

| page-11 |

[Page-12]

- The three-layer structure wasn't their initial idea.

| page-12 |

- Background story of Foveon X3 sensor development

[Page-13]

- This (page-13) is the first camera they produced. It was called the “Prism camera”.

- The camera was actually a laptop PC with a Canon lens.

- They claimed that it had the highest resolution in the world at the time.

- It was very expensive. No record remains, but it was around 2M - 2.5M JPY if I remember correctly.

| page-13 |

[Page-14]

- How did the “prism” come in?

- Do NOT use a color filter, because it lets each cell capture only 1/3 of the light. Capture all of the light, and also avoid color interpolation afterwards.

- First, disperse the incoming light with a prism; second, receive the R, G and B light on three CCDs, one dedicated to each color, without any “filtering”; third, generate the final image.

- Technically it didn't work at all.

- They also did the assembly in Silicon Valley but could not reach a practical yield rate. It did not make good business sense.

- The company was in crisis.

| page-14 |

[Page-15]

- While the company was struggling, Dick Merrill filed patents on a three-layer image sensor.

- Silicon has the property that it absorbs shorter-wavelength light closer to the surface.

- Based on that property, he filed a patent on capturing light with three layers of silicon.

- He had been a top engineer at National Semiconductor and was very popular in Silicon Valley.

- He always looked crabby, and was famously called the “mad scientist” in the company.

- He had a great personality. He always welcomed me (Yamaki-san) when I visited Silicon Valley, told me about a new Japanese noodle restaurant nearby, and said “it must be nice because I saw many Japanese in it!”.

- He was very kind, and really a fantastic person.

- He often wore shorts and a T-shirt while walking around the office. Of course he was very well known in the company, but one day a new employee mistook Dick for an office cleaner and asked him to empty a wastebasket. Dick obeyed without argument. The people who saw it were very upset and told the new employee who the “office cleaner” was, and he turned pale. Dick really had a good sense of humor, too.

- Dick Merrill died of cancer in 2009; it was very sad.

- He had a strong will to make a top-performance camera with SIGMA.

- The current Merrill product series was named out of respect for Dick Merrill's achievements.

- Let me tell you one more story about Dick Merrill. As you may know, SIGMA's factory is located in Aizu, Fukushima, Japan. SIGMA's products are still “MADE IN JAPAN”. Right after the joint project with SIGMA began, Dick visited Japan with his wife.

- He rented a car at Narita airport and traveled straight to Aizu-Wakamatsu city. At the time he knew about the project with SIGMA but didn't know where its factory was. Well, the typical places to go would be Tokyo or Kyoto, don't you think? But according to Dick, he was greatly attracted to Aizu after some research, so he went straight there from the airport.

- Then he returned to Tokyo and had a meeting with Kodak's digital camera people. It was around the time just after the release of the Nikon D1.

- In that meeting, he explained the limitations of the digital cameras of the day and how they could be improved, using digital photos taken on that Aizu trip, such as Tsuruga Castle and Lake Inawashiro.

- At that (Kodak) meeting there was a person who knew about the joint project with SIGMA and had visited the Aizu factory beforehand. He immediately recognized the Aizu photos and told Dick that Aizu was where SIGMA's factory is located. Dick and everyone else in the meeting were very surprised and felt the magic.

- Although Dick Merrill filed the three-layer silicon patent, it seems he did not believe it could feasibly be produced.

| page-15 |

[Page-16]

- Dick Lyon showed the way to realize the three-layer sensor, then secured the budget and gave the project the green light.

- [TN]: Dick Lyon posted several messages on dpreview about the technical aspects of the Quattro sensor. (http://www.dpreview.com/members/663100715/overview)

| page-16 |

[Page-17]

- The project delivered the SD9.

| page-17 |

[Page-18]

- The SD9 was the most wicked camera that ever existed.

| page-18 |

[Page-19]

- SD9 Properties (page-19)

- It carried the world's first three-layer Foveon X3 sensor.

- Outstanding resolution for the time.

- No low-pass filter. SIGMA strongly promoted the benefit of a low-pass-filter-less system for taking good pictures, but had difficulty convincing camera magazine reviewers at the time.

- Nowadays various low-pass-filter-less cameras exist on the market because the advantage is well recognized, but look, SIGMA has been insisting on it for twelve years.

- It was a RAW-only camera, i.e. no JPEG, which means you had to do digital RAW processing to get any image, and it was seriously criticized for that at the time. Again, nowadays Adobe Lightroom, Aperture, SILKYPIX and others exist on the market and there is sufficient awareness of the advantages of RAW processing, but the SD9 came under heavy fire, with people asking who would want such a no-JPEG camera at all. Well yes, SD9 sales were small, that's true. The SD9 was a truly wicked camera, you would agree.

| page-19 |

- The SD9 was equipped with the world's first three-layer Foveon X3 sensor

- Ultra-high resolution at that time

- Low-pass filter-less

- RAW only

[Page-20]

- The topic gets a little technical from here.

- One could say that the Foveon sensor is a “vertically arranged” version of the prism camera (see page-14).

- CFA is an abbreviation of Color Filter Array (sensor). The resolvability of the Foveon sensor is, in CFA sensor equivalent terms, 6.8M pixels.

- The calculation is pixel locations (pixel count) 3.4M multiplied by two, which equals 6.8M. Please keep in mind this “pixel locations multiplied by two” logic.

| page-20 |

- Pixel count: 3x 3.4M Pixel = 10.2M pixel

- Resolvability: CFA sensor equivalent 6.8M pixel

[Page-21]

- In 2007 the SD14 shipped with a new sensor.

| page-21 |

- Pixel count: 3x 4.6M pixel = 14M pixel

- Resolvability: CFA sensor equivalent 9.2M pixel

[Page-22]

- In 2011 the SD1 hit the market.

| page-22 |

- Pixel count: 3x 15M pixel = 46M pixel

- Resolvability: CFA sensor equivalent 30M pixel

[Page-23]

- The X-axis is the year.

- The Y-axis is CFA-sensor-equivalent resolvability.

- 2002 SD9 6.8M pixel

- 2007 SD14 9.2M pixel

- 2011 SD1 Merrill 30M pixel. A great leap.

- 2014 dp2 Quattro 39M (CFA equiv.) resolvability.

- I have heard rumors that Quattro doesn't look like Foveon, or has become a more Bayer-like product, but those are all completely wrong. Quattro is the true, genuine successor of Foveon X3 technology.

- With this slide (page-23) I also wanted to show that Quattro's resolvability has improved.

| page-23 |

- SD1 Merrill (CFA sensor equiv. 30MP)

- dp2 Quattro (CFA sensor equiv. 39MP)

[Page-24]

- Specifications of Quattro sensor

- On Quattro, we call the three layers Top, Middle and Bottom, rather than Blue, Green and Red.

- Top layer 19.6M pixels, middle layer 4.9M pixels, bottom layer 4.9M pixels, 29.4M pixels in total.

- The CFA-sensor-equivalent resolvability comes to around 39M pixels.

- This logic might not be easy to understand.

- Pre-Quattro Foveon sensors have lower resolvability (CFA equiv.) than their total pixel count (e.g. SD1 Merrill: 46M pixel count, 30M resolvability).

- The Quattro sensor has higher resolvability than its total pixel count (i.e. 29.4M pixel count, 39M resolvability). This could leave long-time SIGMA users a little confused. Let me explain how that is possible.

| page-24 |

- Specification of the Quattro sensor

- Total pixel count: 29.4M pixel

- Top layer: 19.6M pixel

- Middle layer: 4.9M pixel

- Bottom layer: 4.9M pixel

- Resolvability: CFA sensor equiv. 39M pixel
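[TN] The layer figures quoted in the lecture notes can be checked with a few lines of Python. This is only a sketch of the arithmetic; the dictionary and function names are hypothetical.

```python
# Layer pixel counts in megapixels, as quoted in the lecture notes.
QUATTRO_LAYERS_MP = {"top": 19.6, "middle": 4.9, "bottom": 4.9}


def total_pixels_mp(layers):
    """Sum of all layer pixel counts, in megapixels."""
    return round(sum(layers.values()), 1)


def layer_ratio(layers):
    """Each layer's pixel count relative to the smallest layer."""
    smallest = min(layers.values())
    return {name: round(count / smallest) for name, count in layers.items()}


if __name__ == "__main__":
    print("total:", total_pixels_mp(QUATTRO_LAYERS_MP), "MP")
    print("ratio:", layer_ratio(QUATTRO_LAYERS_MP))
```

The total comes to 29.4M pixels, and the top layer has four times as many pixels as each lower layer, which is the 1:1:4 (bottom:middle:top) arrangement.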

[Page-25]

- Let me explain why the Foveon sensor has higher resolution than CFA sensors.

- Then, how the Quattro sensor can achieve 39M pixel (CFA sensor equiv.) resolvability with a 19.6M (roughly 20M) pixel count.

| page-25 |

- Why is the resolvability of Foveon sensor superior?

[Page-26]

- On an ordinary CFA sensor, color filters overlay the photodiodes, so each pixel captures 1/3 of the light (R, G or B, only one of them).

- Foveon X3 captures full RGB color information with three layers of silicon.

| page-26 |

- CFA Sensor

- Each pixel can capture 1/3 light only

- Color Filter

- Each pixel captures RGB full color information

[Page-27]

- Foveon X3 captures RGB full (100%) color information, i.e. R100%, G100% and B100%.

- A CFA sensor's color filter pattern allocates G 50%, R 25% and B 25%.

- This R:G:B 1:2:1 ratio is the strength of the Bayer pattern.

- [TN] See http://www.sigma-sd.com/SD1/system.html

| page-27 |

- CFA Sensor

- Blue 25%, Green 50%, Red 25%

- Every pixel captures RGB, thus Blue 100%, Green 100%, Red 100%
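[TN] The 25% / 50% / 25% split follows directly from the repeating 2x2 Bayer tile. The sketch below counts filter colors over a small grid; the RGGB ordering of the tile is an assumption (other orderings give the same fractions).

```python
from collections import Counter

# Assumed RGGB tile, repeated across the whole sensor.
BAYER_TILE = [["R", "G"],
              ["G", "B"]]


def bayer_fractions(height, width):
    """Fraction of photosites covered by each filter color."""
    counts = Counter(BAYER_TILE[r % 2][c % 2]
                     for r in range(height) for c in range(width))
    total = height * width
    return {colour: counts[colour] / total for colour in "RGB"}


if __name__ == "__main__":
    print(bayer_fractions(4, 4))
```

On a three-layer sensor every pixel location records all three colors, so the corresponding fractions are 100% each.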

[Page-28]

- Why does the Bayer pattern allocate “more green” than red and blue?

- One attribute of the human eye is that it has the highest resolution for mid-range wavelengths, i.e. mostly green.

- (page-28) is a graph of the human eye's sensitivity.

- The X-axis is wavelength, from shorter on the left to longer on the right.

| page-28 |

- The sensitivity of the human eye suggests that green is captured most sensitively, then red, and lastly blue.
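[TN] The same green, red, blue ordering shows up in the luma weights used by video and JPEG encoding. The sketch below uses the ITU-R BT.601 weights; these are not taken from the slide, and are shown only as a familiar example of the same idea.

```python
# ITU-R BT.601 luma weights (not from the lecture slide).
REC601_LUMA_WEIGHTS = {"R": 0.299, "G": 0.587, "B": 0.114}


def luma(r, g, b):
    """Weighted sum of R, G, B approximating perceived brightness."""
    w = REC601_LUMA_WEIGHTS
    return w["R"] * r + w["G"] * g + w["B"] * b


if __name__ == "__main__":
    print("pure green:", luma(0, 1, 0))
    print("pure red:  ", luma(1, 0, 0))
    print("pure blue: ", luma(0, 0, 1))
```

Green contributes the most to perceived brightness, then red, then blue.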

[Page-29]

- Let's have a quick test.

- (page-29) The contrast of those four charts is all the same.

- But to our eyes, the green one shows the finest information, then red, and lastly blue.

- The human eye captures contrast most sensitively in green.

- Human vision can capture more detail in green than in the other colors, and this is why the Bayer pattern gives green more, 50%, and red and blue 25% each.

| page-29 |

[Page-30]

- The strength of the Bayer pattern is that it allocates more resolution (i.e. more pixels) to green, imitating human vision.

- (page-30 right) Green cells in the Bayer pattern are placed at alternate positions along both the X and Y axes.

- (page-30 left) Green cells on Foveon fill the whole plane.

- In a manner of speaking, the sampling frequency of the Bayer sensor is half that of the Foveon sensor.

- This is why SIGMA insists that the Foveon sensor has twice the resolution of a CFA sensor for the same pixel count.

| page-30 |

- The Foveon X3 sensor has twice the resolution of a CFA sensor at the same pixel count.

[Page-31]

- If a Foveon sensor has 10MP, it has 20MP of CFA-sensor-equivalent resolvability.

- The Quattro sensor has a 20MP top layer, so it offers 40MP CFA equiv.

- This is the justification for the “39M pixel CFA equiv. resolvability”: the Quattro sensor has 19.6M pixels (about 20M) on the top layer, so it is capable of 39M (about 40M) CFA equiv. resolvability.

| page-31 |
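[TN] The “pixel locations multiplied by two” rule can be applied to every sensor generation mentioned in the slides. The sketch below does just that; the figures come from the slides, the names are hypothetical, and for Quattro the pixel locations are those of the top layer.

```python
# Pixel locations in megapixels, as quoted on the slides.
# For the dp2 Quattro this is the top-layer pixel count.
PIXEL_LOCATIONS_MP = {
    "SD9": 3.4,
    "SD14": 4.6,
    "SD1 Merrill": 15.0,
    "dp2 Quattro": 19.6,
}


def cfa_equivalent_mp(pixel_locations_mp):
    """SIGMA's rule of thumb: CFA-equivalent resolvability = locations x 2."""
    return round(2 * pixel_locations_mp, 1)


def gain_over(newer, older):
    """Ratio of CFA-equivalent resolvability between two models."""
    return (cfa_equivalent_mp(PIXEL_LOCATIONS_MP[newer])
            / cfa_equivalent_mp(PIXEL_LOCATIONS_MP[older]))


if __name__ == "__main__":
    for model, locations in PIXEL_LOCATIONS_MP.items():
        print(model, cfa_equivalent_mp(locations), "MP (CFA equiv.)")
    print("Quattro vs Merrill:",
          round(gain_over("dp2 Quattro", "SD1 Merrill"), 2))
```

By this rule the Quattro comes out at 39.2MP, roughly 1.3 times the 30MP of the SD1 Merrill.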

[Page-32]

- Let's move on to the details of the Quattro sensor.

| page-32 |

[Page-33]

- (page-33) This is an actual image of the Quattro sensor.

| page-33 |

[Page-34]

- The attributes of Quattro sensor

- Three-layer structure, as on its predecessors.

- R:G:B takes a 1:1:4 pixel-location ratio. This structure is the greatest innovation since the first-generation Foveon sensor was delivered.

- APS-C size

- Around 30% higher resolution than the Merrill sensor (CFA sensor equiv.)

|

| page-34 |

- Attributes of Quattro Sensor

- Three layered sensor as ever

- Innovative 1:1:4 structure

- APS-C sized sensor

- 33% higher resolution than Merrill sensor (CFA sensor equiv.)

[Page-35]

- The structure of Quattro sensor

- It captures B G R full color information.

- Top layer captures luminance (or detail) information and blue color information.

- Top layer has two roles.

- Middle layer captures green color information, and bottom layer captures red color information.

|

| page-35 |

- The Structure of Quattro sensor

[Page-36]

- (page-36) Recap of page-24.

- Detail (luminance) information is captured by the 20M pixel top layer, which is why Quattro has resolving power equivalent to a 39M pixel (around 40M) CFA sensor.

|

| page-36 |

- Specification of the Quattro sensor

- Total pixel count: 29.4M pixel

- Top layer: 19.6M pixel

- Middle layer: 4.9M pixel

- Bottom layer: 4.9M pixel

- Resolvability: CFA sensor equiv. 39M pixel
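The figures on this slide can be cross-checked with a few lines of arithmetic. The sketch below uses the per-layer pixel counts listed above and the factor of two that SIGMA claims for CFA equivalence; the constant and variable names are hypothetical.

```python
# Per-layer pixel counts from the slide, in pixels.
TOP = 19_600_000
MIDDLE = 4_900_000
BOTTOM = 4_900_000

# SIGMA's claimed factor between top-layer pixels and CFA-equivalent pixels.
CFA_EQUIV_FACTOR = 2

total_pixels = TOP + MIDDLE + BOTTOM
layer_ratio = (BOTTOM // BOTTOM, MIDDLE // BOTTOM, TOP // BOTTOM)
cfa_equivalent = TOP * CFA_EQUIV_FACTOR

print(f"total: {total_pixels / 1e6:.1f}M pixels")
print(f"bottom:middle:top = {layer_ratio[0]}:{layer_ratio[1]}:{layer_ratio[2]}")
print(f"CFA equivalent: {cfa_equivalent / 1e6:.1f}M pixels")
```

The total comes out at 29.4M and the CFA equivalent at 39.2M, which the slide rounds to 39M.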

[Page-37]

- Why on earth does Quattro take the 1:1:4 structure? What's wrong with the conventional Foveon 1:1:1?

- A sizable increase in data size: a 1:1:1 structure with 3 x 20M pixels would generate 60M pixels of data. This is enormous. Quattro's 1:1:4 structure keeps the data size smaller, at around 30M pixels.

- Time-consuming processing: the smaller the data, the less processing time it takes.

- With the smaller file size and the brand-new, more powerful TRUE III processor, dp2 Quattro needs only around 5 seconds for a “normal” RAW image (though image size depends on the subject).

- DP2 Merrill takes around 12 seconds, so Quattro is more than 2x faster.

- Power consumption is also improved. With a bigger battery, Quattro can take around 200 shots on a full charge, though the camera is still under development so this figure is not final.

- The S/N ratio is improved by the larger photodiodes on the middle and bottom layers. It shows slightly better performance in low light.

- A user should see about a 1-stop improvement on average, though it depends on shooting conditions.

- It's no secret that Foveon is not good at high ISO; it has a lot of noise compared with other cameras. That is something I have to admit.

- The S/N ratio has been improved, yes, but that does not directly mean Quattro performs better at higher ISO. It should, however, show stable performance at ISO 100, 200 and 400. Particularly at ISO 100 and 200, Quattro's “image quality” will be superior to any other camera in the world.

- The base ISO of the Quattro sensor is 100, while Merrill's is 200. Merrill's ISO 100 has somewhat low tolerance for overexposure, but with Quattro you can use ISO 100 without any concern.

|

| page-37 |
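The data-size and speed figures quoted for page-37 follow from simple arithmetic, sketched below. The rounded 20M top-layer count and the 12 s and 5 s processing times are the ones given in the lecture; the function name `samples_per_frame` is made up for this example.

```python
MP = 1_000_000
TOP_PIXELS = 20 * MP  # rounded top-layer pixel count used in the lecture


def samples_per_frame(top_pixels, lower_layer_divisor):
    """Total samples for a three-layer sensor whose two lower layers
    each have top_pixels / lower_layer_divisor pixels."""
    lower = top_pixels // lower_layer_divisor
    return top_pixels + 2 * lower


uniform = samples_per_frame(TOP_PIXELS, 1)  # 1:1:1 structure
quattro = samples_per_frame(TOP_PIXELS, 4)  # 1:1:4 structure

# RAW processing times quoted in the lecture, in seconds.
MERRILL_SECONDS = 12
QUATTRO_SECONDS = 5
speedup = MERRILL_SECONDS / QUATTRO_SECONDS

print(f"1:1:1 -> {uniform // MP}M samples, 1:1:4 -> {quattro // MP}M samples")
print(f"speedup: {speedup:.1f}x")
```

The 1:1:4 layout halves the amount of data relative to a hypothetical 1:1:1 sensor with the same top layer, and the quoted times work out to a 2.4x speedup.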

- Quattro sensor tackles:

- Growing data size

- Time-consuming image processing

- High power consumption

- Better signal characteristics
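To get a feel for why larger photodiodes help the S/N ratio, here is a rough sketch under a deliberately simple model. It assumes that noise is pure photon shot noise (so S/N grows with the square root of the collected photons) and that each middle or bottom photodiode has four times the area of a top-layer one, as the 1:1:4 pixel counts suggest. Both are assumptions for illustration, and the photon count is an arbitrary number. Real sensors also have read noise and other effects, so this is not expected to reproduce the roughly one-stop figure given in the lecture.

```python
import math

AREA_RATIO = 4  # assumed area of a lower-layer photodiode relative to a top-layer one


def shot_noise_snr(photons):
    """S/N under a shot-noise-only model: signal / sqrt(signal)."""
    return photons / math.sqrt(photons)


base_photons = 10_000  # hypothetical photon count for the smaller photodiode

snr_small = shot_noise_snr(base_photons)
snr_large = shot_noise_snr(base_photons * AREA_RATIO)

snr_gain = snr_large / snr_small
gain_db = 20 * math.log10(snr_gain)

print(f"S/N gain: {snr_gain:.1f}x ({gain_db:.2f} dB)")
```

In this idealized model, quadrupling the collecting area doubles the S/N ratio.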

[Page-38]

- Even though the Quattro sensor has a radical 1:1:4 structure, there are very important characteristics that must be retained.

- That is, first of all, Foveon's famous textural expression.

- Images taken by SIGMA cameras are treasured for their rich tone, gradation and texture.

- Displayed or printed, the textures of rocks, rusty or shiny chrome, water surfaces, ice and so on are rendered so richly that the object seems to exist right in front of you. That is the typical praise for images taken by the SIGMA camera series. It is called the “Foveon Look” within the company.

- Simply put, images filled with realism are one of the important properties of the Foveon sensor.

|

| page-38 |

- Quattro sensor retains:-

- Texture expression

- Foveon Look = Realistic texture expression

[Page-39]

- (page-39 1) Why can the Foveon sensor generate texture-rich images?

- (page-39 2) Above all, micro detail (resolvability) is the most important.

- Since image sensors passed around 10M pixels, increasing the pixel count has been criticized as a mere spec battle among camera vendors. Some said 8M pixels should be enough, and that beyond that the data just gets too big without convincing benefit.

- It is true that big image data is difficult to handle, but if we are talking specifically about IMAGE QUALITY, higher resolution is absolute justice.

- More detail from higher resolution ALWAYS fills an image with realism.

- It provides steadfast image quality as a photograph.

- That will NEVER be possible for lower-resolution cameras.

- Because the Foveon sensor has higher resolution, it can create images with more realism.

- SIGMA has a dream, even though it is a very distant one: to make a camera that lets us take photos as if they were shot on 8x10 format, without having to carry a huge 8x10 camera at all.

- (page-39 3) Photo target-independent, steady picturesque capability for details.

- The Foveon sensor shows a target-independent, steady picturesque capability for details; that is, detail is rendered consistently regardless of the colors of the subject.

- The RGB sampling ratio of an ordinary camera is 1:2:1, which means the sampling frequency differs between colors. This can cause inconsistent resolving power depending on the color makeup of the subject, though it is visible only at sufficient magnification.

- SIGMA strongly believes that whether micro fine details are rendered faithfully decides whether the final image has true realism.

- So, one of the important Foveon properties is its target-independent, steady picturesque capability for details.

|

| page-39 |

- Why can Foveon sensor output rich-textured images?

- Micro-Detail (resolvability)

- Photo target-independent, steady picturesque capability for details

[Page-40]

- (page-40) Images which were taken by dp2 Quattro.

- These might not be very beautiful photos, but they are intended to show how dp2 Quattro captures fine details.

|

| page-40 |

[Page-41]

- (page-41) 100% Cropped

|

| page-41 |

[Page-42]

- It might not be obvious just from showing images on a display, but it's photographed..

|

| page-42 |

[Page-43]

- as if it does exist in front of you..

|

| page-43 |

[Page-44]

- and truly texture rich expression you would be able to see..

|

| page-44 |

[Page-45]

- also you might be able to see the meaning of “photo target-independent, steady picturesque capability for details” here.

|

| page-45 |

[Page-46]

- (page-46) The right-hand photo was taken by dp2 Quattro.

- (page-46) The left-hand photo was taken by an ordinary 20M pixel digital camera.

- SIGMA cameras often get reviews like, “it has only 20M pixel locations, therefore this is exactly a 20M pixel camera, isn't it?”

- Even when we insist it has far more than 20M pixels of resolving power, in many cases people flatly say it is a 20M pixel camera.

|

| page-46 |

[Page-47]

- (page-47) Magnified images.

- You should see that the right (Quattro) image has more detail and a more three-dimensional appearance than the left one, though it will depend on the monitor's resolution. Can you see that?

|

| page-47 |

[Page-48]

- From here (page-48), the topic gets somewhat technical, aimed at people who are already familiar with the Foveon sensor.

- Why can detail information be captured on the top layer?

- With the Bayer pattern (refer to page-27 and 28), we explained why green is given more resolution than the others. If that is true, why is Quattro's RGB ratio 1:1:4 rather than 1:4:1 (giving higher resolution to the green layer)?

|

| page-48 |

- Why can detail information be captured on the top layer?

[Page-49]

- My apologies.

- (page-49) This is the sensitivity graph of the Foveon sensor.

- These characteristics of the top, middle and bottom layers have not changed at all since day one of Foveon sensor development.

- The layers have been referred to as blue, green and red, but strictly speaking they are not pure blue, green and red. Each layer is sensitive to a wider part of the spectrum.

- The “blue layer”, for instance, is not pure blue, so we want to stop saying “RGB” layers and say “Top, Middle, Bottom” instead.

- The fact that “each layer has wider sensitivity (not pure R/G/B)” is a very important point for understanding Quattro.

- They are somewhat panchromatic, and each layer absorbs light from the entire spectrum. Thus the top layer, for instance, captures mostly blue light but also catches green and red light to some extent.

- This is why the top layer can be the source of the necessary chroma information, resolution and detail information.

- Why should it be the “top” layer rather than, say, the middle? Because the top layer has the best S/N ratio. So we capture the most detail information at the cleanest location (i.e. the top layer) to get the best image quality.

|

| page-49 |

[Page-50]

- Quattro's chroma resolution is not degraded.

- But it might not be obvious why not.

- Someone might say, “1:1:4 means green and red have only 5M pixels each, which is not comparable to the top layer. 1:1:4 must degrade chroma resolution”.

- On Quattro, R/G/B are not sampled evenly, unlike the classic Foveon sensor (whose 1:1:1 RGB sampling was its signature), yet there is no chroma resolution degradation.

|

| page-50 |

- Why isn't Quattro's color resolution degraded?

[Page-51]

- (page-51) This shows how the Quattro sensor captures light.

- (page-51 left column) Luminance and blue information is collected on the top layer, green on the middle layer and red on the bottom layer.

- (page-51 center column) TRUE III processes both the spatial data (detail information) collected on the top layer and the spectral data (color information) from each layer.

- (page-51 right column) In the end, it generates a 4:4:4 quality (full color resolution) image.

- What I mean is: yes, it is a 1:1:4 structure, but the generated image certainly preserves 4:4:4 full color resolution.

- The top layer's spatial (detail) information can be applied straight down to each layer, and that information really does exist right there (no color interpolation or the like).

- Color consists of hue, saturation and value, and that information does exist there, so TRUE III image processing can produce “true” 4:4:4 information without any color-interpolation kind of step.

- By analogy, the 1:1:4 data is like the result of losslessly compressing what was originally 4:4:4 data.

- That losslessly compressed data is decompressed by TRUE III to obtain 4:4:4 full color resolution image data.

- In reality the story is not that simple, but it can be explained that way as an analogy.

- [TN]: This article from Luminous Landscape about the Las Vegas trade show in 2014 provides an English version of this slide. Find the "Sigma" section in that long page.

|

| page-51 |

- Quattro sensor

- Image quality

- Decompression

- 4:4:4 resolution

- The 1:1:4 structure is roughly synonymous with lossless compression of the middle and bottom layers
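The compression analogy can be made concrete with a toy sketch. This is not SIGMA's TRUE III algorithm, whose details are not given in the lecture; it is a simple detail-transfer scheme chosen purely for illustration, and the function names `bin2x2` and `restore_full_res` are hypothetical. A quarter-resolution layer is brought back to full resolution by adding the fine detail of the full-resolution top layer within each 2x2 block. In this toy model the round trip is exact only when the lower layer shares the top layer's fine detail, which is the assumption the test scene is built on.

```python
def bin2x2(layer):
    """Average each 2x2 block, halving both dimensions."""
    h, w = len(layer) // 2, len(layer[0]) // 2
    return [
        [
            (layer[2 * y][2 * x] + layer[2 * y][2 * x + 1]
             + layer[2 * y + 1][2 * x] + layer[2 * y + 1][2 * x + 1]) / 4
            for x in range(w)
        ]
        for y in range(h)
    ]


def restore_full_res(low_res, top):
    """Upsample low_res to the size of top, adding the top layer's
    deviation from its own 2x2 block mean as fine detail."""
    top_means = bin2x2(top)
    return [
        [
            low_res[y // 2][x // 2] + (top[y][x] - top_means[y // 2][x // 2])
            for x in range(len(top[0]))
        ]
        for y in range(len(top))
    ]


# Toy scene: full-resolution top layer, and a "true" middle layer that
# shares the same fine detail at a different overall level.
top = [
    [10, 14, 20, 24],
    [12, 16, 22, 26],
    [30, 34, 40, 44],
    [32, 36, 42, 46],
]
true_middle = [[v - 5 for v in row] for row in top]

middle_low = bin2x2(true_middle)              # what a quarter-resolution layer would record
restored = restore_full_res(middle_low, top)  # back to full resolution

print(middle_low)
print(restored == true_middle)
```

When the fine detail of the layers differs, this sketch would not recover the original exactly, which is consistent with the lecturer's own caveat that the real story is not that simple.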

[Page-52]

- (page-52) This illustrates DP2 Merrill and dp2 Quattro resolution.

- We can see both cameras capture very fine detail.

- DP2 Merrill is 3150 LW/PH (line widths per picture height).

- dp2 Quattro is 3650 LW/PH.

- You can see it delivers very high resolution in practice and shows clear detail. This proves that Quattro works at Foveon X3 4:4:4 data quality.

- It is also proof that Quattro has more resolution than Merrill.

|

| page-52 |
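The two LW/PH figures can be compared directly. The sketch below computes the linear gain and, assuming resolution scales equally along both axes (an assumption, since LW/PH is measured along one direction), the equivalent gain in pixel count. Variable names are hypothetical.

```python
MERRILL_LWPH = 3150  # DP2 Merrill, line widths per picture height
QUATTRO_LWPH = 3650  # dp2 Quattro, line widths per picture height

# Gain in resolved line widths along one axis.
linear_gain = QUATTRO_LWPH / MERRILL_LWPH

# Equivalent gain in pixel count if both axes improve by the same factor.
pixel_equiv_gain = linear_gain ** 2

print(f"linear gain: {linear_gain:.3f}x")
print(f"pixel-count equivalent gain: {pixel_equiv_gain:.2f}x")
```

The linear gain is about 16%, and squaring it gives a pixel-count equivalent gain of roughly a third, in the same neighborhood as the resolution increase over Merrill quoted on page-34.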

[Page-53]

- Still, someone might be concerned about degradation of color resolution because of the 1:1:4 structure.

- (page-53) Let me show you one more piece of evidence that there is no such degradation.

- (page-53 left-hand side, vertical line band) This photo was taken with a 36M pixel Bayer sensor.

- Resolution is almost equivalent, but you should be able to see a non-negligible difference in how the image details are rendered.

- This is because a Bayer sensor captures RGB in a 1:2:1 ratio, which means the sampling rate differs for each color. This causes color blur.

- Also, pixel locations and color locations are not the same, unlike Foveon, and this is the cause of the false-color issue.

- (page-53 right vertical line band) Quattro is free from such issues.

- If the Quattro sensor were degraded by 1:1:4, the false-color issue would be observed here. But the image shows no false color, which means the final generated image data has 4:4:4 image quality. This is proof that Quattro is a worthy successor to Foveon X3 technology.

- So please set your heart at ease: there is no way for Quattro to have Bayer-sensor-related issues.

- The innovative Quattro 1:1:4 structure makes it possible to reduce data size, increase pixel counts and capture more detail information. This breakthrough technology can take us toward the dream of medium format, or even 8x10, with no real roadblocks, though there is still a very long way to go.

- [TN]: The Imaging Resource interview article provides a clearer image.

|

| page-53 |

- R:G:B resolutions are all equivalent (4:4:4) on Quattro sensor

- Quattro has NO false-color issue because chroma resolution and color resolution are on a par.

[Page-54]

- The lens is just as important as the sensor.

- These days digital camera sensors keep increasing in pixel count.

- But that is meaningless unless the lens has enough resolution.

|

| page-54 |

- Lens

[Page-55]

- The lens is just as important as the sensor.

- dp2 Quattro has exactly the same lens as the DP2 Merrill, because the DP2 Merrill lens has enough resolution.

- I'm confident that the DP2 Merrill lens is one of the highest-resolution products available today.

- The DP3 lens is even better.

- The DP2M and DP3M lenses are used for dp2Q and dp3Q without change.

- Only dp1 Quattro will have a new lens construction.

- Lens and sensor resolution need to match to take a good photograph. As for lenses, SIGMA has also been focusing on its interchangeable lens product line and has earned a good reputation. We understand there is an opinion that the sharpness of SIGMA lenses is overkill in some cases, that they don't have to be so sharp. But if a camera has a high-resolution sensor, the lens also has to be capable of high resolution; that is beyond question. So even if it is said to be too much, SIGMA will keep pursuing high-resolution products, even though lens optics is governed by classical physics and therefore there is no overnight innovation. Stay tuned.

|

| page-55 |

- Sensor and lens have the same importance for image quality.

[Page-56]

- TRUE III has been developed for Quattro.

- dp2 Quattro is chock-full of freshly developed technologies.

- TRUE III is the image processing engine developed to support the Quattro sensor.

|

| page-56 |

[Page-57]

- Lastly, let me introduce the new camera.

|

| page-57 |

[Page-58]

- dp2 Quattro has been getting something of a reputation as a very odd-shaped camera.

- I was deeply shocked when I saw a thread titled something like “Ugly Camera”.

- The higher the resolution a camera provides, the more visible even slight camera shake or out-of-focus becomes.

- That is even more obvious when you use a camera with more than 30M pixels. As a result, you get fewer chances to shoot decent photos.

- Firmly grip the camera with your right hand and hold the lens with your left, then shoot steadily. dp2 Quattro encourages this shooting style, primarily through its grip design and larger lens barrel.

- Now I (Yamaki-san) am testing the prototype on weekends. I can tell you that the more I use it, the more comfortably it fits in my hand. Now it feels sooo good. I would agree that it can feel awkward at the beginning, but please give Quattro some more time. I'm confident that it will satisfy you after two or three days. Then you will happily tweet about it!

|

| page-58 |

[Page-59]

- Quattro is devoted to pure beautility and there are absolutely no unnecessary frills on it.

- Yes, it's bigger than Merrill.

- Quattro's image processing backend is comparable to the system equipped in an ultra high spec professional camera from some major vendor (well “C”'s 1D-something).

- Four analog front-end chips are installed for sensor data readout.

- Then two 2Gbit DDR3 buffer memories.

- And an image pre-processing chip, then TRUE III for post-processing, again with two 2Gbit buffer memories.

- It is certainly comparable in circuit scale to a high-spec professional camera, and even more highly integrated into the small body.

- It's not design for design's sake. It's the incarnation of functionalism, you might say.

- You might think it's big, and even tweet “whoa it's huge” or something, and that's OK. But you, who attended this lecture, might also tweet about Quattro's amazing technology in such a compact body; that would be even more appreciated. :)

- I promise to retweet it as soon as I find such post. :) :)

|

| page-59 |

[Page-60]

- Side view

|

| page-60 |

[Page-61]

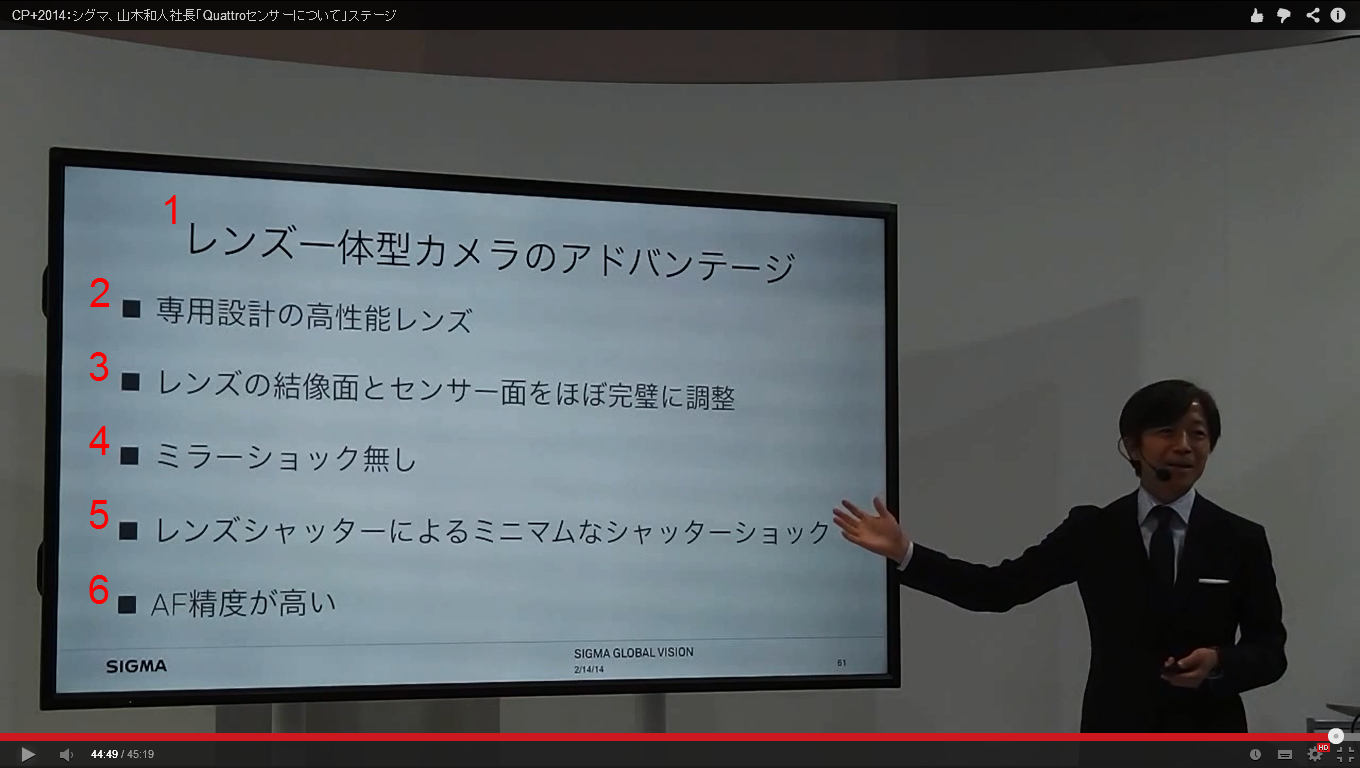

- This is the last page of the lecture.

- Let me tell you the advantages of fixed-lens cameras.

- Of course interchangeable-lens cameras are wonderful. SIGMA has long insisted that changing a lens can transform your photos. In that sense, a system camera is excellent in itself.

- But it is also clear that fixed-lens cameras have a lot of advantages.

- (page-61 2) A dedicated lens is designed for that specific camera. This is easy to understand.

- (page-61 3) The image sensor can be aligned perfectly to the optical axis and imaging plane. The assembly line does the alignment literally one by one.

- In an interchangeable-lens camera body, the image sensor is aligned to the flange face (mounting plane).

- In an interchangeable lens, the optical axis is strictly aligned to the mounting plane.

- For an interchangeable-lens camera system, the rigidity of the mount is definitely crucial.

- That is why SIGMA lens products use brass for all mount-related parts.

- No aluminum, precisely because the mechanical accuracy of the mount is so crucial.

- The higher the resolution of a camera, the more important the mechanical accuracy of the mount. All camera vendors are really struggling with it.

- In any case, an interchangeable-lens camera system depends totally on the mechanical accuracy of its mount, and in that particular sense it always has to take a backseat to a fixed-lens camera.

- A fixed-lens camera can always guarantee perfect resolution from center to corner. This is one of the nicest features of fixed-lens cameras.

- (page-61 4) No mirror shock. Mirror shock is becoming a more serious issue as SLR resolution increases.

- (page-61 5) Minimum shutter shock with a small lens-shutter.

- An interchangeable-lens camera has a focal-plane shutter, which causes more shutter shock than a lens shutter.

- (page-61 6) Higher auto-focus accuracy. This is true for mirrorless cameras for the same reason. In a DSLR, the auto-focus sensor works only with light reflected by the mirror, which means the AF sensor is placed away from the imaging sensor. Mirrorless and fixed-lens cameras perform auto-focus on the imaging plane itself, so AF accuracy is better.

- Because SIGMA cameras do not have fancy automatic functions, they have been criticized as hard to use. Finding complaints like “I bought SIGMA but sold it immediately” on Twitter or wherever is very heartbreaking to me.

- Yes, I have to agree that it can be difficult to handle, in a sense.

- But I would like to insist strongly that those advantages contribute greatly to taking truly high-resolution images. Therefore SIGMA dp is the easiest camera with which to shoot high-resolution photographs; I'm absolutely confident about that.

- I'm actually using it, and experiencing the advantages of dp. DSLR mirror shock always makes it difficult for me to get accurate focus without image shake.

- I'm not a talented photographer, so I'm always struggling, but with dp I have a higher chance of taking good photographs. It lets me take really decent images.

- SIGMA dp might be one of the most difficult cameras to handle. But it is also the easiest camera for taking high-resolution photographs. That is the conclusion of today's lecture.

|

| page-61 |

- Advantages of the fixed-lens camera

- Dedicated high performance lens

- Imaging plane and sensor plane are perfectly synchronized, one by one.

- No mirror-shock

- Minimum shutter shock with lens-shutter

- Higher auto-focus accuracy

[Page-62]

- Thank you very much for your kind attention.

|

| page-62 |

- Thank you very much for your kind attention.
